Auto Chasing Turtle

By Noritsuna Imamura

By autonomous control, this robot recognizes a human's face and chases the recognized human.

Type: Social Impact, Artistic

Website: https://www.siprop.org/en/2.0/index.php?product%2FAutoChasingTurtle

What inspired you or what is the idea that got you started?

This product was developed in 2011.

At that time, it was popular to connect a Kinect to a PC to create VR applications.

However, since it used a PC, it required a power supply and the system was very large.

That made it difficult to create applications that were mobile and highly interactive.

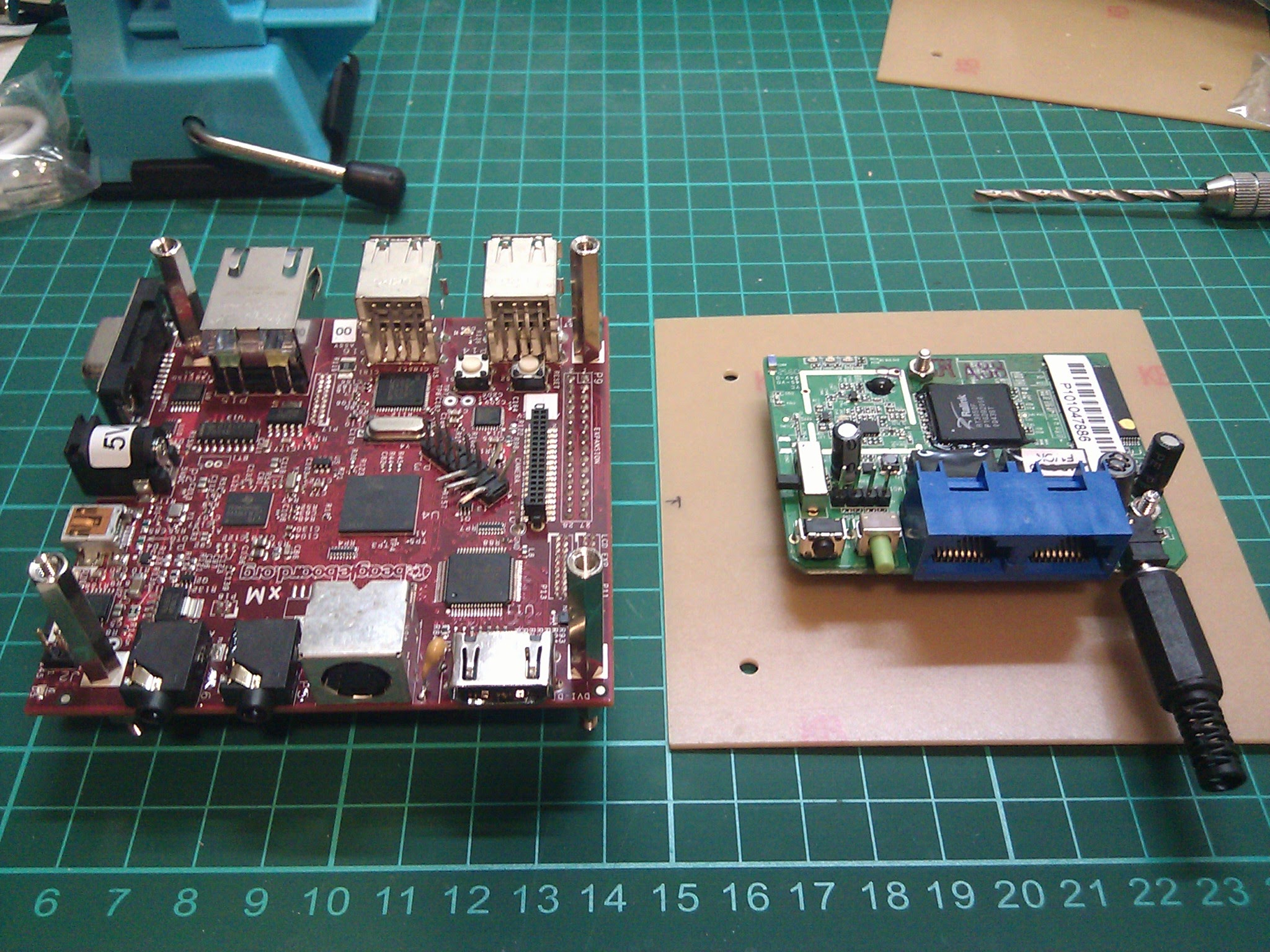

So we ported Google Android and Kinect support to the Beagle Board, a small ARM-based computer that was just starting to come out at that time (the Raspberry Pi was not yet available).

This made the platform highly mobile and interactive and allowed us to create VR/AR/MR applications at will.

This product is an application that uses that platform.

What is your project about and how does it work?

This product is an "Auto Chasing Turtle".

By autonomous control, this robot recognizes a human's face and chases the recognized human.

1. Rotate and look for a human to chase.

2. Try to recognize the human's face with the Kinect's RGB camera.

3. If a face is recognized, calculate the course to the recognized human and turn onto that course.

4. Calculate the distance to the recognized human with the Kinect's depth camera.

5. Chase the recognized human.

6. Repeat steps 2-5. If the robot loses the recognized human, it returns to step 1.

7. You can watch the robot's view on an iPad.
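
The loop in steps 1-6 can be sketched as a small decision function. This is only an illustrative sketch under stated assumptions, not the project's actual code: the names `Observation`, `Command`, and `step`, the normalized face position, and the constants `STOP_DISTANCE_M` and `SEARCH_TURN` are all hypothetical. Face detection and Kinect access are left out; the sketch assumes they have already produced a face position and a depth reading for each frame.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Tuple


class State(Enum):
    SEARCH = "search"  # step 1: rotate and look for a target
    CHASE = "chase"    # steps 2-5: turn toward the face and approach


@dataclass
class Observation:
    """One hypothetical sensor frame.

    face_x:  horizontal face position in the RGB image, normalized to
             [-1.0, 1.0] with 0.0 at the image center; None if no face.
    depth_m: depth-camera distance to the face in meters; None if unknown.
    """
    face_x: Optional[float]
    depth_m: Optional[float]


@dataclass
class Command:
    turn: float     # positive turns right, negative turns left
    forward: float  # forward speed in [0.0, 1.0]; 0.0 means stay in place


STOP_DISTANCE_M = 0.8  # assumed: stop approaching when this close
SEARCH_TURN = 0.5      # assumed: rotation rate while searching


def step(obs: Observation) -> Tuple[State, Command]:
    """Decide the next state and motor command from one sensor frame."""
    # Steps 1 and 6: no face in view, so rotate in place and keep looking.
    if obs.face_x is None:
        return State.SEARCH, Command(turn=SEARCH_TURN, forward=0.0)

    # Step 3: steer toward the face in proportion to its offset from center.
    turn = obs.face_x

    # Step 4: without a usable depth reading, or when already close enough,
    # only turn toward the target and do not move forward.
    if obs.depth_m is None or obs.depth_m <= STOP_DISTANCE_M:
        return State.CHASE, Command(turn=turn, forward=0.0)

    # Step 5: approach, slowing down as the remaining distance shrinks.
    forward = min(1.0, obs.depth_m - STOP_DISTANCE_M)
    return State.CHASE, Command(turn=turn, forward=forward)


if __name__ == "__main__":
    frames = [
        Observation(face_x=None, depth_m=None),  # nobody in view
        Observation(face_x=0.25, depth_m=2.0),   # face to the right, far away
        Observation(face_x=0.0, depth_m=0.5),    # face centered, very close
    ]
    for frame in frames:
        print(step(frame))
```

Calling `step` once per frame reproduces the repeat-and-fall-back behavior of step 6: as long as a face is visible the function keeps returning `State.CHASE`, and the first frame without a face sends the robot back to `State.SEARCH`.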

What did you learn by doing this project?

This product was built on the work of various people and organizations, including Google Android.

I learned that collaborating with others in this way makes it possible to achieve great results, even when a project seems at first glance too advanced and difficult to develop alone.

This is exactly the spirit of Open Source, MAKE:, and "Do It with Others," and I believe I was able to experience part of their essence.

What impact does your project have on others as well as yourself?

After this product, more and more mobile-based products such as Google Glass were launched.

We believe that the path we paved for mobility and interactivity with ARM devices may have influenced these products.